Table of contents

- Why do we need Reverse Proxy?

- How to Install NGINX?

- NGINX as Static File Server

- How to configure NGINX to serve assets?


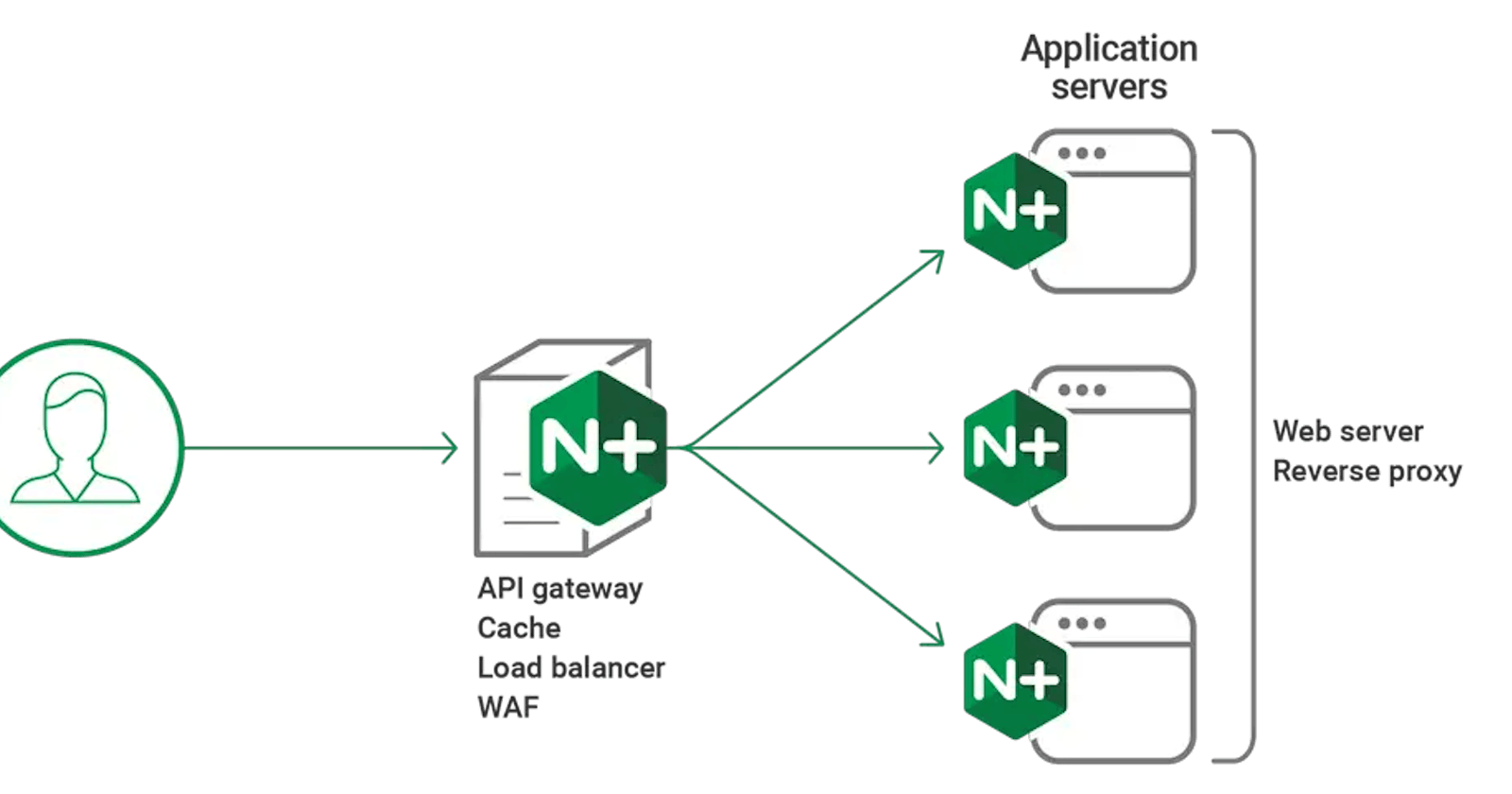

In today's world of modern web applications and microservices architectures, the concept of a reverse proxy has become increasingly important. A reverse proxy acts as an intermediary between clients and backend servers, providing a single entry point for incoming requests. This not only simplifies load balancing and improves overall system performance but also enhances security by hiding the identities and details of the backend servers from the public internet. One of the most popular and widely used reverse proxy solutions is NGINX, open-source software that combines high performance, reliability, and versatility.

Why do we need Reverse Proxy?

We need a Reverse Proxy for several reasons, including:

Load Balancing: A Reverse Proxy can act as a Load Balancer, distributing incoming requests across multiple backend servers. This helps balance the workload, ensuring that no single server becomes overwhelmed, and improves the overall performance and availability of the system.

Traffic Management: By acting as a single entry point for incoming requests, a Reverse Proxy shields individual backend servers from traffic spikes. NGINX in particular uses an event-driven architecture that was designed with the "C10K" problem (10,000 simultaneous connections) in mind, so it can hold many thousands of concurrent connections and spread the work across the available servers, preventing any one server from becoming overwhelmed.

Caching: Reverse Proxies can cache frequently accessed content, such as static files or common responses, reducing the load on the backend servers. When a client requests cached content, the Reverse Proxy can serve it directly, without forwarding the request to the backend servers, resulting in faster response times and better resource utilization.

Security: Reverse Proxies can enhance security by acting as a barrier between the public internet and the internal backend servers. They can implement additional security measures, such as SSL/TLS termination, access control, and rate limiting, protecting the backend servers from direct exposure and potential attacks.

Compression and Optimization: Reverse Proxies can compress and optimize content before serving it to clients, reducing the amount of data transmitted and improving overall performance, especially for clients with limited bandwidth or slower network connections.
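To make the load-balancing idea concrete, here is a toy Bash simulation of round robin, which is the method NGINX uses by default to distribute requests across a group of upstream servers. It only models the selection logic: the backend names are hypothetical and no real traffic is proxied.

```bash
#!/usr/bin/env bash
set -euo pipefail

# Hypothetical backend pool; in NGINX these would be servers in an upstream group.
backends=("app1:3000" "app2:3000" "app3:3000")
assignments=()

for request in 1 2 3 4 5 6 7; do
  # Round robin: request N goes to backend (N - 1) mod pool size.
  index=$(( (request - 1) % ${#backends[@]} ))
  assignments+=("${backends[$index]}")
  echo "request $request -> ${backends[$index]}"
done
```

With three backends, requests 1, 4 and 7 land on app1, requests 2 and 5 on app2, and requests 3 and 6 on app3, so each server receives a roughly equal share of the load.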
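Compression pays off most on text formats such as HTML, CSS and JavaScript, because they contain a lot of repetition. The sketch below assumes the `gzip` command-line tool is installed; it builds a made-up HTML fragment and compares its size before and after gzip compression, the same kind of compression NGINX applies to responses when its gzip module is enabled with `gzip on;`.

```bash
#!/usr/bin/env bash
set -euo pipefail

# Build a made-up HTML fragment with lots of repeated markup.
page=""
for ((i = 1; i <= 200; i++)); do
  page+="<li class=\"item\">Item number $i</li>"$'\n'
done

# $(( ... )) strips any padding that some wc implementations add.
original_size=$(( $(printf '%s' "$page" | wc -c) ))
compressed_size=$(( $(printf '%s' "$page" | gzip -c | wc -c) ))

echo "original:   $original_size bytes"
echo "compressed: $compressed_size bytes"
```

The exact numbers depend on the gzip version, but the compressed payload comes out far smaller than the original, which is the bandwidth saving clients see when the proxy compresses responses.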

How to Install NGINX?

We are going to run NGINX inside a Docker container, which saves us from installing it directly on our computer. The only prerequisite is having Docker installed on your system.

Open a terminal and run the following commands:

docker run -it -p 8080:80 ubuntu

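This command pulls the ubuntu image from Docker Hub (if it is not already available locally), starts an interactive shell inside a new container, and maps port 80 of the container to port 8080 on your local computer. The remaining commands are run inside that container shell.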

apt-get update

This command refreshes the package lists inside the Ubuntu container you are working in.

apt-get install nginx

This command installs the NGINX web server in our container (confirm with Y when prompted).

Then start NGINX by running the following command in the terminal:

nginx

To check that NGINX is running correctly, open http://localhost:8080 in your browser. You should see the default "Welcome to nginx!" page.

NGINX as Static File Server

To make NGINX serve your own files, the only thing you need to change is the nginx.conf file located in /etc/nginx/.

Let's do some hands-on work to see how this works.

Go to the /etc/nginx/ folder, create an assets folder inside it, and add a simple index.html file and a style.css file:

cd /etc/nginx/

mkdir assets

touch assets/index.html assets/style.css

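Write some HTML and CSS into these files (the ubuntu image does not ship with a text editor, so install one first, for example with apt-get install nano). Then we will look at how to edit nginx.conf so that NGINX serves these assets correctly.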
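If you just want something to test with, the script below fills in both files with placeholder content using shell heredocs, which also avoids needing an editor. It is a minimal sketch: the page text and styles are made up for illustration, and it assumes you run it from inside the assets folder (for example after `cd /etc/nginx/assets`).

```bash
#!/usr/bin/env bash
set -euo pipefail

# Write a placeholder HTML page that links to the stylesheet.
cat > index.html <<'EOF'
<!DOCTYPE html>
<html lang="en">
<head>
  <meta charset="utf-8">
  <title>Hello from NGINX</title>
  <link rel="stylesheet" href="style.css">
</head>
<body>
  <h1>Served by NGINX</h1>
  <p>If this heading is styled, style.css was served with the right MIME type.</p>
</body>
</html>
EOF

# Write a small placeholder stylesheet.
cat > style.css <<'EOF'
body { font-family: sans-serif; background: #f4f4f4; }
h1 { color: #009639; }
EOF
```

Because the page pulls in style.css, it doubles as a quick check that the MIME type configuration below is working: if the heading shows up styled, the browser received the stylesheet as CSS.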

How to configure NGINX to serve assets?

This is the core of the post: to make NGINX serve the asset files, we have to change its configuration file. To understand things from first principles, let's write the nginx.conf file from scratch.

We'll back up the existing configuration file first and then write the new configuration.

Here are the steps to achieve this:

1. Navigate to the `/etc/nginx/` directory: `cd /etc/nginx/`

2. Create a backup of the existing `nginx.conf` file: `cp nginx.conf nginx-backup.conf`

3. Open `nginx.conf` in the editor with `nano nginx.conf`, delete the existing contents, and write the new configuration from scratch. Below is a basic example of an `nginx.conf` file that serves static assets:

```nginx
# nginx.conf

events {
    # Maximum number of simultaneous connections per worker process
    worker_connections 1024;
}

http {
    # Set MIME types.
    # NGINX recognizes a few types such as HTML by default, but for
    # other file types (e.g. CSS) we include mime.types from /etc/nginx/
    include /etc/nginx/mime.types;

    # Default server configuration
    server {
        # Listen on port 80
        listen 80;

        # Server name
        server_name localhost;

        # Directory the static assets are served from
        root /etc/nginx/assets;
    }
}
```

In this configuration:

* The `events` block sets `worker_connections`, the maximum number of simultaneous connections each worker process can open.

* The `http` block includes the MIME type mappings from `/etc/nginx/mime.types` and defines a single `server` block.

* The server listens on port 80 and, through the `root` directive, serves the files in `/etc/nginx/assets` from the site root `/`.

* If your static assets live somewhere else, change the `root` path to point to that directory.

4. Once you've finished writing the configuration, save the changes and exit the editor.

In nano editor, you can do this by pressing `Ctrl + X`, then `Y` to confirm changes, and `Enter` to save.

5. After saving `nginx.conf`, check the configuration for syntax errors and then reload NGINX so the changes take effect:

```bash
# Test the configuration file for syntax errors
nginx -t

# Tell the running NGINX master process to reload its configuration
nginx -s reload
```

With this configuration, NGINX serves the files in /etc/nginx/assets from the site root: http://localhost:8080/ returns index.html, and http://localhost:8080/style.css returns the stylesheet with the text/css content type. Adjust the configuration and paths according to your specific setup and requirements.


As we wrap up our exploration of setting up an NGINX server in a Docker container, it's important to note that this is just the beginning of a journey filled with exciting possibilities. In the next installment of this series, we'll take our learnings a step further and delve into the world of NGINX reverse proxies.

Specifically, we'll dive into the process of configuring NGINX as a reverse proxy in front of an Amazon EC2 instance, leveraging its load balancing and caching capabilities to enhance the performance and scalability of your web applications. Additionally, we'll cover the integration of a real domain and the implementation of SSL/TLS encryption using a valid SSL certificate, ensuring secure communication between clients and your server.

The world of web development is ever-evolving, and staying up-to-date with the latest technologies and best practices is crucial for success. At the core of this journey lies a commitment to continuous learning and a passion for coding.

So, until we meet again for our next exciting tech blog, keep exploring, keep coding, and keep pushing the boundaries of what's possible.

We'll see you soon with more insights, tips, and real-world examples that will elevate your skills and empower you to build robust, efficient, and secure web applications. Until then, happy coding! 👨‍💻👨‍💻